We can leverage Fortran 90 array assignment syntax to effect data copies from host memory to GPU device memory, and back:

    Real :: A(M,N)          ! A instantiated in host memory
    Real,device :: Adev(M,N) ! Adev instantiated in GPU memory
    Adev = A                ! Copy data from A (host) to Adev (GPU)
    A = Adev                ! Copy data from Adev (GPU) to A (host)

As a result of these features, CUDA Fortran programs can be written mostly in natural Fortran without many runtime library calls.
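To make the contrast with runtime library calls concrete, here is a minimal sketch that performs the same host/device round trip twice: once with array assignment and once with `cudaMemcpy` from the `cudafor` module. The program name, the array sizes, and the second host array `B` are assumptions chosen for illustration.

```fortran
program copy_demo
  use cudafor
  implicit none
  integer, parameter :: M = 4, N = 3   ! illustrative sizes (assumption)
  real :: A(M,N), B(M,N)               ! host arrays
  real, device :: Adev(M,N)            ! device array
  integer :: istat

  ! Round trip using plain array assignment
  A = 1.0
  Adev = A                             ! host -> device
  B = Adev                             ! device -> host
  print *, 'assignment round trip matches: ', all(A == B)

  ! The same round trip using explicit runtime calls;
  ! the count argument is given in array elements
  A = 2.0
  istat = cudaMemcpy(Adev, A, M*N)
  if (istat /= cudaSuccess) print *, cudaGetErrorString(istat)
  istat = cudaMemcpy(B, Adev, M*N)
  if (istat /= cudaSuccess) print *, cudaGetErrorString(istat)
  print *, 'cudaMemcpy round trip matches: ', all(A == B)
end program copy_demo
```

The assignment form keeps the code looking like ordinary Fortran, while the explicit call returns a status code that can be checked.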

Device declarations and allocation look like this:

    Real,device :: A(M,N)             ! A instantiated in GPU memory
    Real,device,allocatable :: B(:,:) ! B is allocatable in GPU memory
    Allocate(B(size(A,1),size(A,2)),stat=istat) ! Allocate B in GPU memory

In addition to using the DEVICE attribute to specify data that should reside on the GPU device, SHARED and CONSTANT attributes can be used to place data in CUDA shared or constant memory respectively. A PINNED attribute can be used to specify host arrays to be placed in CUDA pinned memory.
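The following sketch shows how the SHARED, CONSTANT, and PINNED attributes might appear together in one small program. The module, kernel, and variable names (`attr_demo`, `scale_and_reverse`, `coef`, `buf`, `BLOCK_SIZE`) are hypothetical, and the kernel assumes the array length is an exact multiple of the block size.

```fortran
module attr_demo
  use cudafor
  implicit none
  integer, parameter :: BLOCK_SIZE = 256   ! hypothetical threads per block
  real, constant :: coef(2)                ! placed in CUDA constant memory
contains
  ! Each block scales its slice of v, then writes the slice back reversed.
  ! Assumes n is a multiple of BLOCK_SIZE.
  attributes(global) subroutine scale_and_reverse(n, v)
    integer, value :: n
    real :: v(n)
    real, shared :: buf(BLOCK_SIZE)        ! placed in CUDA shared memory
    integer :: t, base
    t = threadIdx%x
    base = (blockIdx%x - 1) * BLOCK_SIZE
    buf(t) = coef(1) * v(base + t) + coef(2)
    call syncthreads()                     ! wait until the whole block has written buf
    v(base + t) = buf(BLOCK_SIZE - t + 1)
  end subroutine scale_and_reverse
end module attr_demo

program main
  use cudafor
  use attr_demo
  implicit none
  integer, parameter :: n = 4 * BLOCK_SIZE
  real, pinned, allocatable :: h(:)        ! host array in CUDA pinned memory
  real, device, allocatable :: d(:)
  integer :: istat, i

  allocate(h(n), stat=istat)
  if (istat /= 0) stop 'host allocation failed'
  allocate(d(n), stat=istat)
  if (istat /= 0) stop 'device allocation failed'

  h = (/ (real(i), i = 1, n) /)
  coef = (/ 2.0, 1.0 /)                    ! host code initializes constant memory
  d = h
  call scale_and_reverse<<<n / BLOCK_SIZE, BLOCK_SIZE>>>(n, d)
  h = d
  print *, 'first and last elements: ', h(1), h(n)
  deallocate(h, d)
end program main
```

Shared memory is visible only to the threads of one block, which is why the barrier is needed before any thread reads an element written by another.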

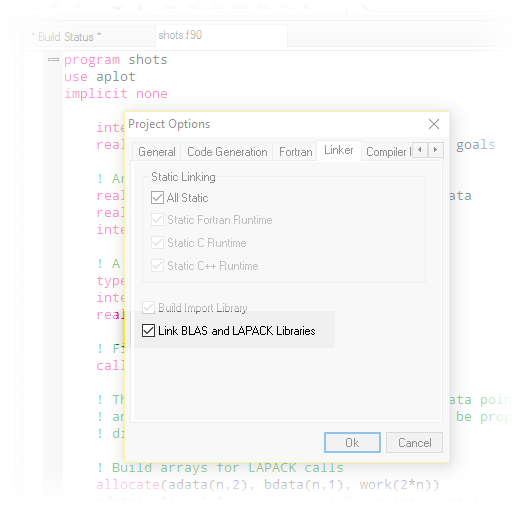

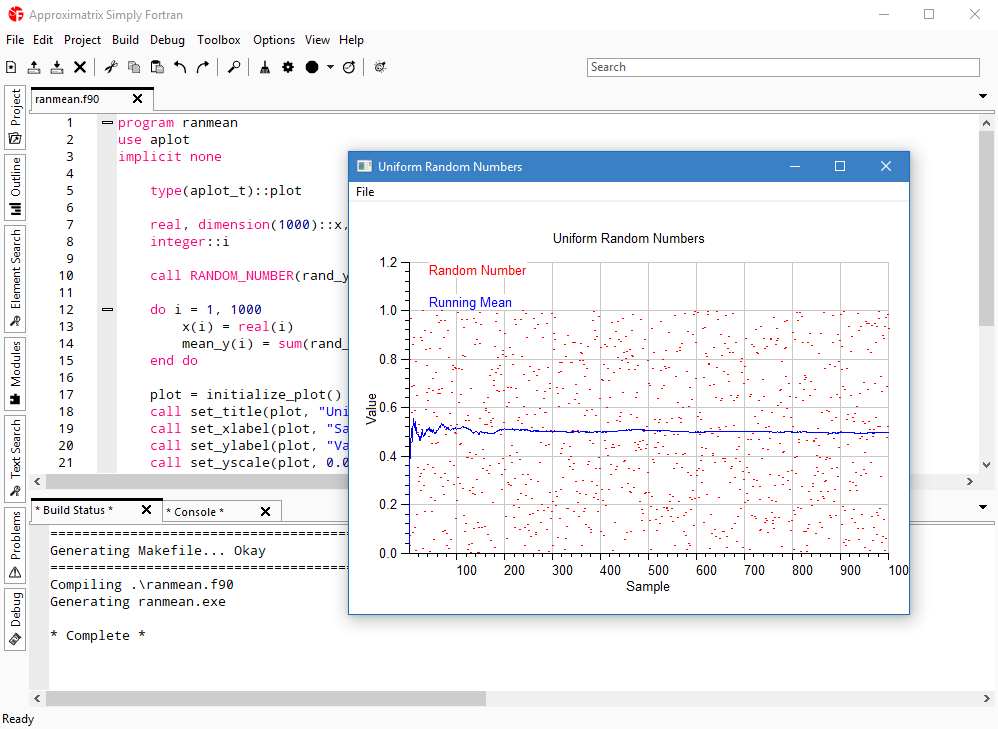

NVIDIA CUDA is a general purpose parallel programming architecture with compilers and libraries to support programming of NVIDIA GPUs. The CUDA SDK includes an extended C compiler, here called CUDA C, allowing GPU programming from a high level language. The CUDA programming model supports four key abstractions: cooperating threads organized into thread groups, shared memory and barrier synchronization within thread groups, and coordinated independent thread groups organized into a grid.

PGI and NVIDIA defined CUDA Fortran, which is supported in the upcoming PGI 2010 release, to enable CUDA programming directly in Fortran. CUDA Fortran is a small set of extensions to Fortran that supports and is built upon CUDA. The extensions allow the following actions in a Fortran program:

- Declaration of variables that reside in GPU device memory
- Dynamic allocation of data in GPU device memory
- Copying of data from host memory to GPU memory, and back
- Invocation of GPU subroutines from the host

A CUDA programmer partitions a program into coarse-grained blocks that can be executed in parallel. Each block is partitioned into fine-grained threads, which can cooperate using shared memory and barrier synchronization. A properly designed CUDA program will run on any CUDA-enabled GPU, regardless of the number of available processor cores. This article will teach you the basics of CUDA Fortran programming and enable you to quickly begin writing your own CUDA Fortran programs.

Those of you familiar with CUDA programming know the following basic steps for creating a CUDA C program:

- Insert CUDA function calls to allocate data in GPU device memory
- Insert CUDA function calls to copy host data to the GPU, and back
- Convert loop nests into implicitly parallel grids/thread blocks
- Isolate innermost loop code into GPU kernel functions
- Use CUDA grids/thread blocks to launch GPU kernel functions
- Insert CUDA function calls to free GPU device memory

Three features of Fortran 90 were leveraged to enable use of more natural language constructs in CUDA Fortran: attributed declaration syntax, the allocate statement, and array assignments. Rather than using a special data allocation function for GPU device data allocation, we can use a combination of F90 attributed declarations and allocate statements to create device-resident data.
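As a rough illustration of the four CUDA Fortran extensions (device declarations, device allocation, host/device copies, and kernel invocation from the host), here is a minimal SAXPY-style sketch. The module and kernel names, the problem size, and the block size of 256 threads are assumptions for the example, not taken from the article.

```fortran
module saxpy_mod
  use cudafor
  implicit none
contains
  ! GPU kernel: each thread updates at most one element of y
  attributes(global) subroutine saxpy(x, y, a)
    real :: x(:), y(:)          ! dummy arrays in a kernel reside in device memory
    real, value :: a
    integer :: i, n
    n = size(x)
    i = blockDim%x * (blockIdx%x - 1) + threadIdx%x
    if (i <= n) y(i) = y(i) + a * x(i)   ! guard threads past the end of the array
  end subroutine saxpy
end module saxpy_mod

program main
  use cudafor
  use saxpy_mod
  implicit none
  integer, parameter :: n = 40000             ! illustrative size (assumption)
  real :: x(n), y(n)                          ! host arrays
  real, device, allocatable :: x_d(:), y_d(:) ! declared in GPU device memory
  type(dim3) :: grid, tBlock
  integer :: istat

  x = 1.0
  y = 2.0

  allocate(x_d(n), y_d(n), stat=istat)        ! dynamic allocation on the GPU
  if (istat /= 0) stop 'device allocation failed'

  x_d = x                                     ! host -> device copies
  y_d = y

  ! One thread per element: coarse-grained blocks of fine-grained threads
  tBlock = dim3(256, 1, 1)
  grid = dim3(ceiling(real(n) / tBlock%x), 1, 1)
  call saxpy<<<grid, tBlock>>>(x_d, y_d, 2.0) ! kernel invoked from the host

  y = y_d                                     ! device -> host copy
  print *, 'max deviation from 4.0: ', maxval(abs(y - 4.0))

  deallocate(x_d, y_d)
end program main
```

The bounds check inside the kernel matters because the grid is rounded up to whole blocks, so the last block may contain threads with no element to process.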
